So today I’d been planning to write about a new paper from our lab, just out in Neuropsychologia, in which we show how people without synaesthesia can be trained, over a few weeks, to have synaesthesia-like experiences – and that this training induces noticeable changes in their brains. It’s interesting stuff, and I will write about it later, but this morning I happened to read a recent piece by Olivia Goldhill in Quartz with the provocative title: “The idea that everything from spoons to stones are conscious is gaining academic credibility” (Quartz, Jan 27, 2018). This article had come up in a Twitter discussion involving my colleague and friend Hakwan Lau about the challenge of maintaining the academic credibility of consciousness science, with Hakwan noting that provocative articles like this don’t often get the pushback they deserve.

So here’s some pushback.

Goldhill’s article is about panpsychism, which is the idea that consciousness is a fundamental property of the universe, present to some degree everywhere and in everything. Her article suggests that this view is becoming increasingly acceptable and accepted in academic circles, as so-called ‘traditional’ approaches (materialism and dualism) continue to struggle. On the contrary, although it’s true that panpsychism is being discussed more frequently and more openly these days, it remains very much a fringe proposition within consciousness science and is not taken seriously by many. Nor need it be, since consciousness science is getting along just fine without it. Let me explain how.

From hard problems to real problems

We should start with philosophy. Goldhill correctly identifies David Chalmers’ famous ‘hard problem of consciousness’ as a key origin of modern panpsychism. This is bolstered by Chalmers’ own increasing apparent sympathy with this view, as Goldhill’s article makes clear. Put simply, the ‘hard problem’ is about how and why physical interactions of any sort can give rise to conscious experiences. This is indeed a difficult problem, and the apparent unavailability of any current solution is why those who fixate on it might be tempted by the elixir of panpsychism: if consciousness is ‘here, there, and everywhere’ then there is no longer any hard problem to be solved.

But consciousness science has largely moved on from attempts to address the hard problem (though see IIT, below). This is not a failure, it’s a sign of maturity. Philosophically, the hard problem rests on conceivability arguments such as the possibility of imagining a philosophical ‘zombie’ – a behaviourally and perhaps physically identical version of me, or you, but which lacks any conscious experience, which has no inner universe. Conceivability arguments are generally weak since they often rest on failures of imagination or knowledge, rather than on insights into necessity. For example: the more I know about aerodynamics, the less I can imagine a 787 Dreamliner flying backwards. It cannot be done and such a thing is only ‘conceivable’ through ignorance about how wings work.

In practice, scientists researching consciousness are not spending their time (or their scarce grant money) worrying about conscious spoons; they are getting on with the job of mapping mechanistic properties (of brains, bodies, and environments) onto properties of consciousness. These properties can be described in many different ways, but include – for example – differences between normal wakeful awareness and general anaesthesia; experiences of identifying with and owning a particular body; and distinctions between conscious and unconscious visual perception. If you come to the primary academic meeting on consciousness science – the annual meeting of the Association for the Scientific Study of Consciousness (ASSC) – or read articles either in specialist journals like Neuroscience of Consciousness (I edit this, other journals are available) or in the general academic literature, you’ll find a wealth of work like this and very little – almost nothing – on panpsychism. You’ll find debates on the best way to test whether prefrontal cortex is involved in visual metacognition – but you won’t find any experiments on whether stones are aware. This, again, is maturity, not stagnation. It is also worth pointing out that consciousness science is having increasing impact in medicine, whether through improved methods for detecting residual awareness following brain injury, or via enhanced understanding of the mechanisms underlying psychiatric illness. Thinking about conscious spoons just doesn’t cut it in this regard.

A standard objection at this point is that empirical work touted as being about consciousness science is often about something else: perhaps memory, attention, or visual perception. Yes, some work in consciousness science may be criticized this way, but it is not generally the case. To the extent that the explanatory target of a study encompasses phenomenological properties, or differences between conscious states (e.g., dreamless sleep versus wakeful rest), it is about consciousness. And of course, consciousness is not independent of other cognitive and perceptual processes – so empirical work that focuses on visual perception can be relevant to consciousness even if it does not explicitly contrast conscious and unconscious states.

The next objection goes like this: OK, you may be able to account for properties of consciousness in terms of underlying mechanisms, but this is never going to explain why consciousness is part of the universe in the first place – it is never going to solve the hard problem. Therefore consciousness science is failing. There are two responses to this.

First, wait and see (and ideally do). By building increasingly sophisticated bridges between mechanism and phenomenology, the apparent mystery of the hard problem may dissolve. Certainly, if we stick with simplistic ‘explanations’ – for instance by associating consciousness simply with activity in (for example) the prefrontal cortex – everything may remain mysterious. But if we can explain (for example) the phenomenology of peripheral vision in terms of neurally-encoded predictions of expected visual uncertainty, perhaps we are getting somewhere. It is unwise to pronounce the insufficiency of mechanistic accounts of some putatively mysterious phenomenon before such mechanistic accounts have been fully developed. This is one reason why frameworks like predictive processing are exciting – they provide explanatorily powerful, computationally explicit, and empirically predictive concepts which can help link phenomenology and mechanism. Such concepts can help move beyond correlation towards explanation in consciousness science, and as we move further along this road the hard problem may lose its lustre.

Second, people often seem to expect more from a science of consciousness than they would ask of other scientific explanations. As long as we can formulate explanatorily rich relations between physical mechanisms and phenomenological properties, and as long as these relations generate empirically testable predictions which stand up in the lab (and in the wild), we are doing just fine. Riding behind many criticisms of current consciousness science are unstated intuitions that a mechanistic account of consciousness should be somehow intuitively satisfying, or even that it must allow some kind of instantiation of consciousness in an arbitrary machine. We don’t make these requirements in other areas of science, and indeed the very fact that we instantiate phenomenological properties ourselves might mean that a scientifically satisfactory account of consciousness will never generate the intuitive sensation of ‘ah yes, this is right, it has to be this way’. (Thomas Metzinger makes this point nicely in a recent conversation with Sam Harris.)

Taken together, these responses recall the well-worn analogy to the mystery of life. Not so long ago, scientists thought that the property of ‘being alive’ could never be explained by physics or chemistry, and that life had to be more than mere ‘mechanism’. But as biologists got on with the job of accounting for the properties of life in terms of physics and chemistry, the basic mystery of the ontological status of life faded away and people no longer felt the need to appeal to vitalistic concepts like ‘élan vital’. Now of course this analogy is imperfect, and from our current vantage it is impossible to say how closely it will stand up over time. Consciousness and life are not the same (though they may be more closely linked than people tend to think – another story!). But the basic point remains: instead of focusing on a possibly illusory big mystery – and thereby falling for the temptations of easy big solutions like panpsychism – the best strategy is to divide and conquer. Identify properties and account for them, and repeat. Chalmers himself describes something like this strategy when he talks about the ‘mapping problem’, and with tongue-somewhat-in-cheek I’ve called it ‘the real problem of consciousness’.

The lure of integrated information theory

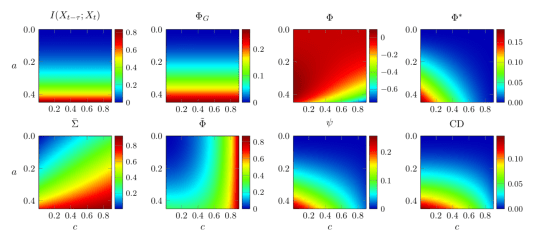

A major boost for modern panpsychism has come from Giulio Tononi’s much discussed – and fascinating – integrated information theory of consciousness (IIT). This is a formal mathematical theory which attempts to derive constraints on the mechanisms of consciousness from axioms about phenomenology. It’s a complex theory (and apparently getting more complex all the time) but the relevance for panpsychism is straightforward. On IIT, any mechanism that integrates information in the right way exhibits consciousness to some degree. And the ability to integrate information is very general, since it depends on only the cause-effect structure of a system.

Tononi actually goes further than this, in a crucial but subtle way. For him, the (integrated) information that counts is based not only on what a system has done (i.e., what states it has been in), but on what a system could do (i.e., what states it could be in, even if it has never occupied and will never occupy these states). Technically, this is the difference between the empirical distribution of a system and its maximum entropy distribution. This feature of IIT not only makes it hard (usually impossible) to calculate for nontrivial systems, it pushes further towards panpsychism because it implies an ontological status for certain forms of information – much like John Wheeler’s ‘it from bit’. If (integrated) information is real (and therefore more-or-less everywhere), and if consciousness is based on (integrated) information, then consciousness is also more-or-less everywhere, thus panpsychism.
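
For readers who like to see the distinction in numbers, here is a minimal sketch of the two distributions for a toy system of two binary units. It is an illustration only and does not compute integrated information in any form. The recorded states are made up, the function names are my own, and I am assuming that "maximum entropy" means a uniform distribution over every state the system could in principle occupy.

```python
import math
from collections import Counter
from itertools import product


def entropy_bits(probs):
    """Shannon entropy, in bits, of a collection of probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def empirical_distribution(observed_states):
    """Relative frequency of each state the system has actually visited."""
    counts = Counter(observed_states)
    total = len(observed_states)
    return {state: n / total for state, n in counts.items()}


def max_entropy_distribution(n_units):
    """Uniform distribution over all possible states of n binary units
    (assumption: no constraints beyond the size of the state space)."""
    states = list(product((0, 1), repeat=n_units))
    p = 1 / len(states)
    return {state: p for state in states}


# Hypothetical recording: the first unit never switches on, so only two of
# the four possible joint states are ever observed.
observed = [(0, 0), (0, 0), (0, 1), (0, 0), (0, 1), (0, 0), (0, 0), (0, 1)]

empirical = empirical_distribution(observed)
maxent = max_entropy_distribution(2)

h_emp = entropy_bits(empirical.values())
h_max = entropy_bits(maxent.values())

print(f"states observed: {len(empirical)} of {len(maxent)} possible")
print(f"empirical entropy: {h_emp:.3f} bits")
print(f"maximum entropy:   {h_max:.3f} bits")
```

The empirical distribution only knows about the states that turned up in the recording, whereas the maximum entropy distribution gives equal weight to states the system never visited. A measure built on the first can be estimated from data; a measure built on the second needs knowledge of everything the system could do.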

But this is not the only way to formulate IIT. Several years ago, Adam Barrett and I formulated a measure of integrated information which depends only on the empirical distribution of a system, and now many competing measures exist. These measures can be applied more easily in practice, and they do not directly imply panpsychism because they can be interpreted as explanatory bridges between mechanism and phenomenology (in the ‘real problem’ sense), rather than as claims about what consciousness actually is. So when Goldhill writes that IIT “shares the panpsychist view that physical matter has innate conscious experience” this is only true for the strong version of the theory articulated by Tononi himself. Other views are possible, and more empirically productive.

Back to science

This leads us to the main problem with panpsychism. It’s not that it sounds crazy, it’s that it cannot be tested. It does not lead to any feasible programme of experimentation. Progress in scientific understanding requires experiments and testability. Given this, it’s curious that Goldhill introduces us to Arthur Eddington, the physicist who experimentally confirmed Einstein’s (totally crazy-sounding) theory of general relativity. Eddington’s immense contribution to experimental physics should not give credence to his views on panpsychism, it should instead remind us of the essential imperative of formulating testable theories, however difficult such tests might be to carry out. (Modern physics is of course now facing a similar testability crisis with string theory.) And outlandish speculations about how quantum entanglement might lead to universe-wide consciousness have no place whatsoever in a rigorous and empirically grounded science of consciousness.

I can’t finish this post without noting that the current attention to panpsychism, especially in the media, has a lot to do with the views of some particularly influential figures in the field: Chalmers and Tononi, but also Christof Koch, whose early work with Francis Crick was fundamental in the rehabilitation of consciousness science in the late 1990s and who continues to be a major figure in the field. These people are all incredibly smart and have made extremely important contributions within consciousness science and beyond. I have learned a great deal from each, and I owe them intellectual debts I will never be able to repay. Having said that, their views on panpsychism are firmly in the minority and should not be over-weighted simply because of their historical contributions and current prominence. Whether there is something about having made such influential contributions that leads to a tendency to adopt countercultural (and difficult to test) views later on – well that’s for another day and another writer.

At the end of her piece, Goldhill quotes Chalmers quoting the philosopher John Perry who says: “If you think about consciousness long enough, you either become a panpsychist or you go into administration.” Perhaps the problem lies in only thinking. We should instead complement thinking with the challenging empirical work of explaining properties of consciousness in terms of biophysical mechanisms. Then we can say: If you work on consciousness long enough, you either become a neuroscientist or you become a panpsychist. I know where I’d rather be – with my many colleagues who are not worrying about conscious spoons but who are working at, and little by little succeeding in, unravelling the complex biophysical mechanisms that shape our subjective experiences of world and self. And now it’s high time I got back to that paper on training synaesthesia.

(For more general discussions about consciousness science, where it’s at and where we’re going, have a listen to my recent conversation with Sam Harris. Make sure you have time for it though, it clocks in at over three hours …)