For thousands of years people have wondered about the mystery of consciousness. How can anything made of physical stuff – a brain, for instance – be identical to, or give rise to, a subjective experience? Despite a revival in the … Continue reading

What’s up with the rubber hand illusion? Here we clarify how our recent studies should be interpreted, and what they mean for experimental studies of embodiment. Continue reading

I was in Dublin last week, for the biennial meeting of the British Neuroscience Association. Amid the usual buzz of new research findings – and an outstanding public outreach programme – something different was in the air. There is now an unstoppable momentum behind efforts to increase the credibility of research in psychology and neuroscience (and in other areas of science too) and this momentum was fully on show at the BNA. There was a ‘credibility zone’, nestled among the usual mess of posters and bookstalls, and a keynote lecture from Professor Uta Frith on the three ‘R’s and what they mean for neuroscientists: reproducibility, replicability and reliability of research. The BNA itself has recently received £450K from the Gatsby Foundation to support a new ‘credibility in neuroscience programme’. Science can only progress when we can trust its findings, and while outright fraud is rare, the implicit demands of the ‘publish or perish’ culture can easily lead to unreliable results, as various replication crises have amply revealed. Measures to counter these dangers are therefore more than welcome – they are necessary.

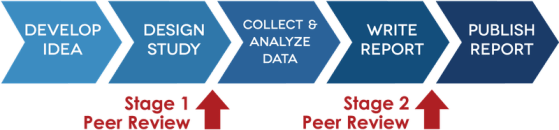

This is why I’m delighted that Neuroscience of Consciousness, part of the Oxford University Press family of journals, is now accepting Registered Report submissions. Registered Reports are a form of research article in which the methods and proposed analyses are written up and reviewed before the research is actually conducted. Typically, and as implemented in our journal, a ‘stage 1’ submission includes a detailed description of the study protocol. If this stage 1 submission is accepted after peer review, a stage 2 submission, which includes the results and discussion, can follow. The key innovation of a Registered Report is that acceptance at stage 1 guarantees publication at stage 2, whichever way the results actually turn out – so long as the protocol specified at stage 1 has been properly followed. Also important is that Registered Reports do not exclude exploratory analyses – they only require that such analyses are clearly flagged up. Of course, not all research will be suitable for the Registered Report format, but we do encourage researchers to use it whenever they can. I’m very pleased that we have a dedicated member of our editorial board, Professor Zoltan Dienes, who will handle Registered Report submissions and who can advise on the process.

Registered Reports are just one among many innovations aimed at improving the credibility of research. Another important development is the emphasis on pre-registration of research designs, so that planned analyses can be unambiguously separated from exploratory analyses. This may be suitable in many cases when a full Registered Report is not. Neuroscience of Consciousness strongly encourages all experimental studies, wherever possible, to be pre-registered. This can be quite easy to do with facilities like Open Science Framework and aspredicted.org. Better science can also be catalysed through publication of methods and resources papers, including datasets. Here again I’m delighted that Neuroscience of Consciousness has launched a new submission category – ‘methods and resources’ articles – to encourage this kind of work.

As many have already emphasized, this emerging ‘open science’ research culture is not about calling people out or being holier-than-thou. Like many others, I’ve faced my own challenges in getting to grips with this rapidly evolving landscape. These challenges will no doubt continue, and it’s been uncomfortable contemplating some work I’ve led or been involved with in the past. Collectively, though, we have a duty to improve our practice and deliver not only more robust results but also more robust methodologies for advancing scientific understanding. My own laboratory embraced an explicit open science policy several months ago, setting out heuristics for best practice across a number of different research methodologies. This policy came primarily from discussions among the researchers, rather than ‘top down’ from me as the overall lab head, and I’m grateful that it did. One thing that’s become clear is that lab heads and research group leaders would do well to reflect on their expectations of research fellows and graduate students. One well-designed pre-registered (ideally registered report) publication is worth n interesting-but-underpowered studies (choose your n). It goes without saying that these changed expectations must also filter through to funding bodies and appointment committees. I am confident that they will.

I prefer to think of these new developments in research practice and methodology as an exciting new opportunity, rather than as a scrambled response to a perceived crisis. And I’m greatly looking forward to seeing the first Registered Report appear in Neuroscience of Consciousness. Whichever way the results turn out.

*

(Many thanks to Chris Chambers for his advice and encouragement in setting up a Registered Report pipeline, to Rosie Chambers and Lucy Oates at OUP for making it happen, to Zoltan Dienes for agreeing to handle Registered Report submissions editorially, and to Warrick Roseboom, Peter Lush, Bence Palfi, Reny Baykova, and Maxine Sherman for leading open science discussions in our lab.)

You press a light switch and the light comes on. What could be simpler than that? But notice something. As the light comes on, you probably have a feeling that, somehow, you caused that to happen. This experience of ‘being … Continue reading

This is a Guest Blog written by Peter Lush, postdoctoral researcher at the Sackler Centre for Consciousness Science, and lead author on this new study. It’s all about our new preprint. A key challenge for psychological research is how to … Continue reading

Salvador Dali, The Persistence of Memory, 1931

Our new paper, led by Warrick Roseboom, is out now (open access) in Nature Communications. It’s about time.

More than fifteen hundred years ago, though who knows how long exactly, Saint Augustine complained “What then is time? If no-one asks me, I know; if I wish to explain to one who asks, I know not.”

The nature of time is endlessly mysterious, in philosophy, in physics, and also in neuroscience. We experience the flow of time, we perceive events as being ordered in time and as having particular durations, yet there are no time sensors in the brain. The eye has rod and cone cells to detect light, the ear has hair cells to detect sound, but there are no dedicated ‘time receptors’ to be found anywhere. How, then, does the brain create the subjective sense of time passing?

Most neuroscientific models of time perception rely on some kind of internal timekeeper or pacemaker, a putative ‘clock in the head’ against which the flow of events can be measured. But despite considerable research, clear evidence for these neuronal pacemakers has been rather lacking, especially when it comes to psychologically relevant timescales of a few seconds to minutes.

An alternative view, and one with substantial psychological pedigree, is that time perception is driven by changes in other perceptual modalities. These modalities include vision and hearing, and possibly also internal modalities like interoception (the sense of the body ‘from within’). This is the view we set out to test in this new study, initiated by Warrick Roseboom here at the Sackler Centre, and Dave Bhowmik at Imperial College London, as part of the recently finished EU H2020 project TIMESTORM.

*

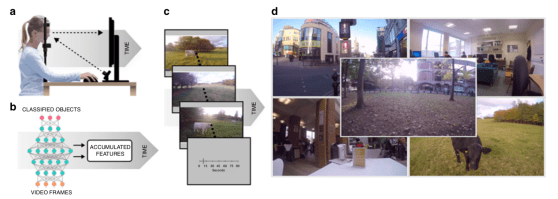

Their idea was that one specific aspect of time perception – duration estimation – is based on the rate of accumulation of salient events in other perceptual modalities. More salient changes, longer estimated durations. Fewer salient changes, shorter durations. We set out to test this idea using a neural network model of visual object classification modified to generate estimates of salient changes when exposed to natural videos of varying lengths (Figure 1).

Figure 1. Experiment design. Both human volunteers (a, with eye tracking) and a pretrained object classification neural network (b) view a series of natural videos of different lengths (c), recorded in different environments (d). Activity in the classification networks is analysed for frame-to-frame ‘salient changes’ and records of salient changes are used to train estimates of duration – based on the physical duration of the video. These estimates are then compared with human reports. We also compare networks trained on gaze-constrained video input versus ‘full frame’ video input.

We first collected several hundred videos of five different environments and chopped them into varying lengths from 1 sec to ~1 min. The environments were quiet office scenes, café scenes, busy city scenes, outdoor countryside scenes, and scenes from the campus of Sussex University. We then showed the videos to some human participants, who rated their apparent durations. We also collected eye tracking data while they viewed the videos. All in all we obtained over 4,000 duration ratings.

The behavioural data showed that people could do the task, and that – as expected – they underestimated long durations and overestimated short durations (Figure 2a). This ‘regression to the mean’ effect, known as Vierordt’s law, is well established in the time perception literature. Our human volunteers also showed biases according to the video content, rating busy (e.g., city) scenes as lasting longer than non-busy (e.g., office) scenes of the same physical duration. This is just as expected, if duration estimation is based on accumulation of salient perceptual changes.

For the computational part, we used AlexNet, a pretrained deep convolutional neural network (DCNN) which has excellent object classification performance across 1,000 classes of object. We exposed AlexNet to each video, frame by frame. For each frame we examined activity in four separate layers of the network and compared it to the activity elicited by the previous frame. If the difference exceeded an adaptive threshold, we counted a ‘salient event’ and accumulated a unit of subjective time at that level. Finally, we used a simple machine learning tool (a support vector machine) to convert the record of salient events into an estimate of duration in seconds, in order to compare the model with human reports. There are two important things to note here. The first is that the system was trained on the physical duration of the videos, not on the human estimates (apparent durations). The second is that there is no reliance on any internal clock or pacemaker at all (the frame rate is arbitrary – changing it doesn’t make any difference).
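To make the accumulation step concrete, here is a minimal Python sketch of counting ‘salient events’ from frame-to-frame changes against a decaying threshold. It is an illustration under stated assumptions, not the code used in the paper: the feature vectors stand in for network-layer activations, and the function name `count_salient_events`, the parameters `t_max`, `t_min` and `decay`, and the reset-and-decay rule are all hypothetical choices. The regression step that maps event counts to seconds is left out.

```python
import math


def count_salient_events(frames, t_max=1.0, t_min=0.1, decay=0.8):
    """Count frame-to-frame changes that exceed a decaying threshold.

    frames: list of equal-length feature vectors, one per video frame
            (a stand-in for activations in one network layer).
    t_max, t_min, decay: hypothetical threshold parameters.
    """
    threshold = t_max
    events = 0
    for prev, curr in zip(frames, frames[1:]):
        change = math.dist(prev, curr)  # Euclidean distance between frames
        if change > threshold:
            events += 1
            threshold = t_max  # reset the threshold after a salient event
        else:
            # no event: let the threshold relax towards its floor
            threshold = max(t_min, threshold * decay)
    return events


# Toy inputs: a slowly drifting 'quiet' scene and a rapidly changing 'busy' one.
quiet = [[0.05 * i, 0.0] for i in range(10)]
busy = [[2.0 * i, 0.0] for i in range(10)]
print("quiet:", count_salient_events(quiet))
print("busy:", count_salient_events(busy))
```

In this toy version a ‘busy’ sequence accumulates more events than a ‘quiet’ one of the same length, which is the intuition behind the content biases seen in the human data.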

Figure 2. Main results. Human volunteers can do the task and show characteristic biases (a). When the model is trained on ‘full-frame’ data it can also do the task, but the biases are even more severe (b). There is a much closer match to human data when the model input is constrained by human gaze data (c), but not when the gaze locations are drawn from different trials (d).

There were two key tests of the model. Was it able to perform the task? More importantly, did it reveal the same pattern of biases as shown by humans?

Figure 2(b) shows that the model indeed performed the task, classifying longer videos as longer than shorter videos. It also showed the same pattern of biases, though these were more exaggerated than for the human data (a). But – critically – when we constrained the video input to the model by where humans were looking, the match to human performance was incredibly close (c). (Importantly, this match went away if we used gaze locations from a different video, d). We also found that the model displayed a similar pattern of biases by content, rating busy scenes as lasting longer than non-busy scenes – just as our human volunteers did. Additional control experiments, described in the paper, rule out that these close matches could be achieved just by changes within the video image itself, or by other trivial dependencies (e.g., on frame rate, or on the support vector regression step).

Altogether, these data show that our clock-free model of time perception, based on the dynamics of perceptual classification, provides a sufficient basis for capturing subjective duration estimation of visual scenes – scenes that vary in their content as well as in their duration. Our model works on a fully end-to-end basis, going all the way from natural video stimuli to duration estimation in seconds.

*

We think this work is important because it comprehensively illustrates an empirically adequate alternative to ‘pacemaker’ models of time perception.

Pacemaker models are undoubtedly intuitive and influential, but they raise the spectre of what Daniel Dennett has called the ‘fallacy of double transduction’. This is the false idea that perceptual systems somehow need to re-instantiate a perceived property inside the head, in order for perception to work. Thus perceived redness might require something red-in-the-head, and perceived music might need a little band-in-the-head, together with a complicated system of intracranial microphones. Naturally no-one would explicitly sign up to this kind of theory, but it sometimes creeps in unannounced to theories that rely too heavily on representations of one kind or another. And it seems that proposing a ‘clock in the head’ for time perception provides a prime example of an implicit double transduction. Our model neatly avoids the fallacy, and as we say in our Conclusion:

“That our system produces human-like time estimates based on only natural video inputs, without any appeal to a pacemaker or clock-like mechanism, represents a substantial advance in building artificial systems with human-like temporal cognition, and presents a fresh opportunity to understand human perception and experience of time.” (p.7).

We’re now extending this line of work by obtaining neuroimaging (fMRI) data during the same task, so that we can compare the computational model activity against brain activity in human observers (with Maxine Sherman). We’ve also recorded a whole array of physiological signatures – such as heart-rate and eye-blink data – to see whether we can find any reliable physiological influences on duration estimation in this task. We can’t, and the preprint (with Marta Suarez-Pinilla) is here.

*

Major credit for this study to Warrick Roseboom who led the whole thing, with the able assistance of Zafeirios Fountas and Kyriacos Nikiforou with the modelling. Major credit also to David Bhowmik who was heavily involved in the conception and early stages of the project, and also to Murray Shanahan who provided very helpful oversight. Thanks also to the EU H2020 TIMESTORM project which supported this project from start to finish. As always, I’d also like to thank the Dr. Mortimer and Theresa Sackler Foundation, and the Canadian Institute for Advanced Research, Azrieli Programme in Brain, Mind, and Consciousness, for their support.

*

Roseboom, W., Fountas, Z., Nikiforou, K., Bhowmik, D., Shanahan, M.P., and Seth, A.K. (2019). Activity in perceptual classification networks as a basis for human subjective time perception. Nature Communications. 10:269.

Our new paper on ‘measuring integrated information’ is out now, open access, in the journal Entropy. It’s part of a special issue dedicated to integrated information theory.

In consciousness research, ‘integrated information theory’, or IIT, has come to occupy a highly influential and rather controversial position. Acclaimed by some as the most important development in consciousness science so far, critiqued by others as too mathematically abstruse and empirically untestable, IIT is by turns both fascinating and frustrating. Certainly, a key challenge for IIT is to develop measures of ‘integrated information’ that can be usefully applied to actual data. These measures should capture, in empirically interesting and theoretically profound ways, the extent to which ‘a system generates more information than the sum of its parts’. Such measures are also of interest in many domains beyond consciousness, for example in physics and engineering, where notions of ‘dynamical complexity’ are of more general importance.

Adam Barrett and I have been working towards this challenge for many years, both through approximations of the measure Φ (‘phi’, central to the various iterations of IIT) and through alternative measures like ‘causal density’. Alongside new work from other groups, there now exists a range of measures of integrated information – yet so far no systematic comparison of how they perform on non-trivial systems.

This is what we provide in our new paper, led by Adam along with Pedro Mediano from Imperial College London.

*

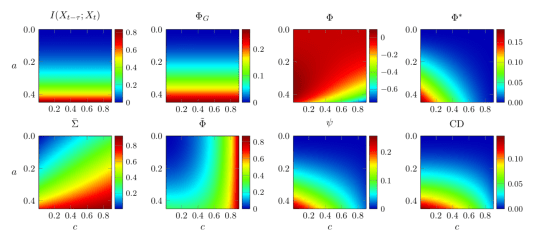

We describe, using a uniform notation, six different candidate measures of integrated information (among which we count the related measure of ‘causal density’). We set out the intuitions behind each, and compare their properties across a series of criteria. We then explore how they behave on a variety of network models, some very simple, others a little bit more complex.

The most striking finding is that the measures all behave very differently – no two measures show consistent agreement across all our analyses. Here’s an example:

Diverse behavior of measures of integrated information. The six measures (plus two control measures) are shown in terms of their behavior on a simple 2-node network animated by autoregressive dynamics.

At first glance this seems worrying for IIT since, ideally, one would want conceptually similar measures to behave in similar ways when applied to empirical test-cases. Indeed, it is worrying if existing measures are used uncritically. However, by rigorously comparing these measures we are able to identify those which better reflect the underlying intuitions of ‘integrated information’, which we believe will be of some help as these measures continue to be developed and refined.

Integrated information, along with related notions of dynamical complexity and emergence, is likely to be an important pillar of our emerging understanding of complex dynamics in all sorts of situations – in consciousness research, in neuroscience more generally, and beyond biology altogether. Our new paper provides a firm foundation for the future development of this critical line of research.
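As a rough illustration of what ‘more than the sum of its parts’ can mean for a 2-node autoregressive system like the one in the figure above, the Python sketch below computes a simple ‘whole-minus-sum’ quantity: the time-delayed mutual information of the whole system minus that of each node taken alone, assuming Gaussian variables. The coupling matrices, the unit-variance uncorrelated noise, and the function names are assumptions made for this example. It does not reproduce the analyses or parameter settings in the paper.

```python
import math


def matmul(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def transpose(a):
    return [[a[j][i] for j in range(2)] for i in range(2)]


def det(a):
    return a[0][0] * a[1][1] - a[0][1] * a[1][0]


def stationary_cov(A, iters=2000):
    """Stationary covariance of x_t = A x_{t-1} + noise, with identity
    noise covariance, found by iterating S <- A S A^T + I."""
    S = [[1.0, 0.0], [0.0, 1.0]]
    At = transpose(A)
    for _ in range(iters):
        M = matmul(matmul(A, S), At)
        S = [[M[i][j] + (1.0 if i == j else 0.0) for j in range(2)]
             for i in range(2)]
    return S


def whole_minus_sum(A):
    """Time-delayed mutual information of the whole system minus the sum
    over single nodes, in nats, for a stable 2-node Gaussian AR(1) model."""
    S = stationary_cov(A)
    # Whole system: the covariance of x_t given x_{t-1} is the noise
    # covariance (identity, determinant 1).
    i_whole = 0.5 * math.log(det(S))
    # Single nodes: the lagged covariance is C = A S, and the variance of
    # node i given its own past is S_ii - C_ii^2 / S_ii.
    C = matmul(A, S)
    i_parts = 0.0
    for i in range(2):
        cond_var = S[i][i] - C[i][i] ** 2 / S[i][i]
        i_parts += 0.5 * math.log(S[i][i] / cond_var)
    return i_whole - i_parts


uncoupled = [[0.5, 0.0], [0.0, 0.5]]  # two independent nodes
coupled = [[0.4, 0.4], [0.4, 0.4]]    # each node also drives the other
print("uncoupled:", whole_minus_sum(uncoupled))
print("coupled:", whole_minus_sum(coupled))
```

With independent nodes the whole carries no more information about its own future than the parts do, so the quantity is zero up to rounding. With this particular coupling it is positive.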

*

One important caveat is necessary. We focus on measures that are, by construction, applicable to the empirical, or spontaneous, statistically stationary distribution of a system’s dynamics. This means we depart, by necessity, from the supposedly more fundamental measures of integrated information that feature in the most recent iterations of IIT. These recent versions of the theory appeal to the so-called ‘maximum entropy’ distribution since they are more interested in characterizing the ‘cause-effect structure’ of a system than in saying things about its dynamics. This means we should be very cautious about taking our results to apply to current versions of IIT. But, in recognizing this, we also return to where we started in this post. A major issue for the more recent (and supposedly more fundamental) versions of IIT is that they are extremely challenging to operationalize and therefore to put to an empirical test. Our work on integrated information departs from ‘fundamental’ IIT precisely because we prioritise empirical applicability. This, we think, is a feature, not a bug.

*

All credit for this study to Pedro Mediano and Adam Barrett, who did all the work. As always, I’d like to thank the Dr. Mortimer and Theresa Sackler Foundation, and the Canadian Institute for Advanced Research, Azrieli Programme in Brain, Mind, and Consciousness, for their support. The paper was published in Entropy on Christmas Day, which may explain why some of you might’ve missed it! But it did make the cover, which is nice.

*

Mediano, P.A.M., Seth, A.K., and Barrett, A.B. (2019). Measuring integrated information: Comparison of candidate measures in theory and in simulation. Entropy. 21:17.

The short piece below first appeared in Scientific American (Observations) on October 26, 2018. It is a coauthored piece, led by me with contributions from Michael Schartner, Enzo Tagliazucchi, Suresh Muthukumaraswamy, Robin Carhart-Harris, and Adam Barrett. Since its appearance, both Dr. Kastrup and Prof. Kelly have responded. I attach links to their replies after our article, offering a few comments in further response (entirely my own point of view). These comments just offer additional clarifications – I stand fully by everything said in our Sci Am piece.

It’s not easy to strike the right balance when taking new scientific findings to a wider audience. In a recent opinion piece, Bernardo Kastrup and Edward F. Kelly point out that media reporting can fuel misleading interpretations through oversimplification, sometimes abetted by the scientists themselves. Media misinterpretations can be particularly contagious for research areas likely to pique public interest—such as the exciting new investigations of the brain basis of altered conscious experience induced by psychedelic drugs.

Unfortunately, Kastrup and Kelly fall foul of their own critique by misconstruing and oversimplifying the details of the studies they discuss. This leads them towards an anti-materialistic view of consciousness that has nothing to do with the details of the experimental studies—ours or others.

Take, for example, their discussion of our recent study reporting increased neuronal “signal diversity” in the psychedelic state. In this study, we used “Lempel-Ziv” complexity—a standard algorithm used to compress data files—to measure the diversity of brain signals recorded using magnetoencephalography (MEG). Diversity in this sense is related to, though not entirely equivalent to, “randomness.” The data showed widespread increased neuronal signal diversity for three different psychedelics (LSD, psilocybin and ketamine), when compared to a placebo baseline. This was a striking result since previous studies using this measure had only reported reductions in signal diversity, in global states generally thought to mark “decreases” in consciousness, such as (non-REM) sleep and anesthesia.
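For readers curious about what a Lempel-Ziv style diversity score looks like in practice, here is a minimal Python sketch. It binarizes a signal around its median and counts the distinct phrases found by a simple dictionary-based (LZ78-style) parse. More phrases means a less compressible, more diverse signal. The median threshold, the function names and the toy signals are assumptions for illustration, and this is not the analysis pipeline from the study.

```python
import random
import statistics


def binarize(signal):
    """Map each sample to '1' if above the median, else '0'
    (an assumed binarization rule for this sketch)."""
    med = statistics.median(signal)
    return "".join("1" if x > med else "0" for x in signal)


def lz_phrase_count(bits):
    """Count phrases in a simple LZ78-style parse of a binary string.

    The string is read left to right; each time the current phrase has
    not been seen before, it is stored and a new phrase is started.
    """
    seen = set()
    phrase = ""
    for b in bits:
        phrase += b
        if phrase not in seen:
            seen.add(phrase)
            phrase = ""
    # Count any leftover, already-seen phrase at the end of the string.
    return len(seen) + (1 if phrase else 0)


rng = random.Random(0)  # fixed seed so the toy example is repeatable
n = 1000
regular = [i % 2 for i in range(n)]       # strictly alternating samples
noisy = [rng.random() for _ in range(n)]  # uncorrelated noise

print("regular:", lz_phrase_count(binarize(regular)))
print("noisy:", lz_phrase_count(binarize(noisy)))
```

The regular signal needs far fewer phrases than the noisy one, which is the sense in which diversity is related to, but not the same thing as, randomness.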

Media reporting of this finding led to headlines such as “First evidence found that LSD produces ‘higher’ levels of consciousness” (The Independent, April 19, 2017)—playing on an ambiguity between cultural and scientific interpretations of “higher”—and generating just the kind of confusion that Kastrup and Kelly rightly identify as unhelpful.

Unfortunately, Kastrup and Kelly then depart from the details in misleading ways. They suggest that the changes in signal diversity we found are “small,” when it is not magnitude but statistical significance and effect size that matters. Moreover, even small changes to brain dynamics can have large effects on consciousness. And when they compare the changes reported in psychedelic states with those found in sleep and anesthesia, they neglect the important fact that these analyses were conducted on different data types (intracranial data and scalp-level EEG respectively—compared to source-localized MEG for the psychedelic data)—making quantitative comparisons very difficult.

Having set up the notion that the changes we observed were “small,” they then say, “To suggest that brain activity randomness explains psychedelic experiences seems inconsistent with the fact that these experiences can be highly structured and meaningful.” However, neither we nor others claim that “brain activity randomness” explains psychedelic experiences. Our finding of increased signal diversity is part of a larger mission to account for aspects of conscious experience in terms of physiological processes. In our view, higher signal diversity indicates a larger repertoire of physical brain states that very plausibly underpin specific aspects of psychedelic experience, such as a blending of the senses, dissolution of the “ego,” and hyper-animated imagination. As standard functional networks dissolve and reorganize, so too might our perceptual structuring of the world and self.

“In short, a formidable chasm still yawns between the extraordinary richness of psychedelic experiences and the modest alterations in brain activity patterns so far observed.” Here, their misrepresentations are again exposed. To call the alterations modest is to misread the statistics. To claim a “formidable chasm” is to misunderstand the incremental nature of consciousness research (and experimental research generally), to sideline the constraints and subtleties of the relevant analyses and to ignore the insights into psychedelic experience that such analyses provide.

Kastrup and Kelly’s final move is to take this presumed chasm as motivation for questioning “materialist” views, held by most neuroscientists, according to which conscious experiences —and mental states in general—are underpinned by brain states. Our study, like all other studies that explore relations between experiential states and brain states (whether about psychedelics or not), is entirely irrelevant to this metaphysical question.

These are not the only inaccuracies in the piece that deserve redress. For example, their suggestion that decreased “brain activity” is one of the more reliable findings of psychedelic research is incorrect. Aside from the well-known stimulatory effects of psychedelics on the excitatory glutamate system, early reports of decreased brain blood flow under psilocybin have not been well replicated: a subsequent study by the same team using a different protocol and drug kinetics (intravenous LSD) found only modest increases in brain blood flow confined to the visual cortex. In contrast, more informative dynamic measures have revealed more consistent findings, with network disintegration, increases in global connectivity and increased signal diversity/entropy appearing to be particularly reliable outcomes, replicated across studies and study teams.

Consciousness science remains a fragile business, poised precariously between grand ambition, conflicting philosophical worldviews, immediate personal relevance and the messy reality of empirical research. Psychedelic research in particular has its own awkward cultural and historical baggage. Against this background, it’s important to take empirical advances for what they are: yardsticks of iterative, self-correcting progress.

This research is providing a unique window onto mappings between mechanism and phenomenology, but we are just beginning to scratch the surface. At the same time—and perhaps more importantly—psychedelic research is demonstrating an exciting potential for clinical use, for example in alleviating depression, though larger and more rigorous studies are needed to confirm and contextualize the promising early findings.

Kastrup and Kelly are right to guard against overplaying empirical findings by the media. But by misrepresenting the explanatory reach of our findings in order to motivate metaphysical discussions irrelevant to our study, they risk undermining the hard-won legitimacy of a neuroscience of consciousness. Empirical consciousness science, based firmly on materialistic assumptions, is doing just fine. And unlike alternative perspectives that place themselves “beyond physicalism,” it will continue to shed light on one of our deepest mysteries through rigorous application of the scientific method.

You can read Dr. Kastrup’s response here, and Prof. Kelly’s here. In the spirit of constructive clarification I will offer a few additional comments on the parts of the work I was involved in: the signal diversity study and the general interpretation of how empirical work on the brain basis of psychedelic experience speaks to metaphysical debates about the nature of consciousness. These comments relate mainly to Prof. Kelly’s critique.

(With respect to Dr. Kastrup’s comments I will simply offer, as he no doubt knows, that relating fMRI BOLD to neural activity – in terms of global baseline and regionally differentiated metabolics, functional neuronal connectivity, and so on – remains an area of extremely active research and rapid methodological innovation.)

1. Prof. Kelly notes that we do not provide ‘exact N’s for the data segments we used to compute measures of signal diversity. This is because they varied substantially between drug condition, participant, and analysis method. We do however clearly state that “[a]nalyses were performed using non-overlapping segments of length 2 sec for a total length between 2 min and 10 min of MEG recording per participant and state” (Schartner et al 2017, p.5). These numbers indeed lead to a total number of segments ranging from ~3,500 to ~27,000 per participant and per state (since we have 90 channels/sources per segment). These large numbers provide stable statistical inference (e.g., by the central limit theorem). Also, as we mentioned (above) the absolute scores on the diversity scale are not as meaningful as effect size and statistical significance. I’d also like to add that in our paper we go to great lengths to establish that our reported diversity changes do not trivially follow from well-known spectral changes in the drug conditions – this is part of the unavoidable computational sophistication of the method, when done properly.

2. When Prof. Kelly says that “relatively simple neuroimaging methods can easily distinguish between wakeful and drowsy states and other commonplace conditions” I do not disagree at all. Our paper was specifically interested in signal diversity as a metric of brain dynamics (and as mentioned above we take care to de-confound our diversity results from spectral changes). Also, we do not claim these diversity changes fully explain the extraordinary phenomenology of psychedelic states. However, I do believe that they contribute helpfully to the incremental empirical project of mapping, in explanatorily satisfying ways, between mechanism and phenomenology. I defend the general approach in this 2016 Aeon article: ‘the “real” problem of consciousness’.

3. I also agree the measures of signal diversity we apply are only part of the story when mapping between experiential richness and brain dynamics. My lab (and others too) have worked hard on developing empirically adequate measures of ‘neural complexity’, ‘causal density’, and ‘integrated information’ which are theoretically richer – but unfortunately, at least so far, not very robust when applied to actual data – and are substantially more computationally sophisticated. See here for a recent preprint. We have to do what we can with the measures we have, while always striving to generate and validate better measures.

4. I do not buy the claim that near-death-experiences provide an empirical challenge to physicalist neuroscience (as argued by Prof. Kelly). See my previous blog post on this issue (‘the brain’s last hurrah’).

5. No need to impute a bias towards physicalism to me! I explicitly and happily adopt physicalism as a pragmatic metaphysics for pursuing a (neuro)science of consciousness. I can do this while remaining agnostic about the actual ontological status of consciousness. The problem with many alternative metaphysics – in my view – is that they do not lead to testable propositions. Dr. Kastrup and Prof. Kelly are of course entirely entitled to their own metaphysics. I was merely objecting to their usage of our psychedelic research in support of their metaphysics, because I think it is entirely irrelevant. I simply do not accept that there are any “evident tensions between physicalist expectations and the experimental results [from psychedelic neuroimaging]”.

6. Finally, we can hopefully all agree on the importance of forestalling, as far as possible, media misinterpretations. This is true whatever one’s metaphysics. And it’s why, when our diversity paper first appeared, I felt compelled to pen an immediate corrective right here in this blog (‘Evidence for a higher state of consciousness? Sort of‘).

After posting, I realized I had not specifically responded to Bernardo’s initial reaction to our Sci Am piece. There is some overlap with the above points, but please anyway allow me to correct this oversight here.

1. Clearing the semantic fog. I hope I have made clear my intended distinction between ‘fully explain’ and ‘incrementally account for.’ Again my Aeon piece elaborates the strategy of refining explanatory mappings between mechanism and phenomenology.

2. Metaphysical claims. Our work is consistent with materialism and is motivated by it, but empirical studies like this are not suited to arbitrate between competing metaphysical positions (unless such positions state that there are no relations at all between brains and conscious experiences). Empirical studies like ours try to account for phenomenological properties in terms of mechanisms – but in doing so there is no need to make claims that one is addressing the (metaphysical) ‘hard problem’ of consciousness. Kastrup and Kelly have written that “the psychedelic brain imaging research discussed here has brought us to a major theoretical decision point as to which framework best fits with all the available data” – where ‘physicalism’ is one among several (metaphysical) ‘frameworks’. I continue to think the research discussed here is irrelevant to this ‘decision point’, unless one is deciding to reject frameworks that postulate no relation between consciousness and the brain. The fact that the research is about psychedelics rather than (for example) psychophysics is neither here nor there.

3. What the researchers fail to address. I do not agree with the premise that there is an inconsistency between the dream state and the psychedelic state in terms of neural evidence. As noted above, measures of brain dynamics and activation are being continuously refined and innovated and it is overly simplistic to characterise the relevant dimensions in terms of gross ‘level of activity’. Also, dreams and psychedelia are different. The point about ‘randomness’ I have addressed already (diversity is not presented as an exhaustive explanation of psychedelic phenomenology).

4. A surprising claim. I respectfully refrain from addressing these points about the MRI/MEG studies since I was not involved with them. This does not mean I condone Bernardo’s comments. I will only repeat that brute measures of increased/decreased brain activity are less informative than more sophisticated measures of neural dynamics and connectivity, and studies are accumulating to more precisely map brain changes in psychedelic states.

5. The issue of statistics. It is not meaningful to compare, quantitatively, the ‘magnitudes’ of changes in subjective experience with magnitudes of statistical effect size as applied to (for example) our diversity measures. We made this point already in our Sci Am piece. I find it quite natural to suppose that a massively meaningful change in subjective experience might have a subtle neuronal signature in the brain (and as I have said, diversity/randomness is only a small part of any full ‘explanation’ anyway).

6. A non-sequitur. I do think it’s misleading to speak of a “formidable chasm” between “the magnitude of the subjective effects of a psychedelic trance and the accompanying physiological changes” for the reasons given in point 5 above.

7. Final thoughts. I indeed hope we can all agree that psychedelic research is interesting, exciting, valuable, evolving, clinically important, and generally highly worthwhile. I hope we can also agree, as mentioned above, that forestalling media misrepresentations is important. On other matters I doubt there will be full agreement between my views (and those of my colleagues) and Bernardo’s and Edward’s. They are certainly entitled to their metaphysics. I simply wish to point out (i) our studies do help build explanatory bridges between neural mechanism and psychedelic phenomenology, and (ii) they do not provide any additional reasons to entertain non-physicalist metaphysics.

And with that, I’m afraid I’ll have to draw a line under this interesting discussion – at least for my involvement. I hope it generates some light amid the heat.

Want to know more about consciousness science? What follows is a personal, subjective collection of resources intended for people without much or any prior training in neuroscience, psychology, or philosophy, who want to gain a foothold in the exciting field of consciousness science.

So today I’d been planning to write about a new paper from our lab, just out in Neuropsychologia, in which we show how people without synaesthesia can be trained, over a few weeks, to have synaesthesia-like experiences – and that this training induces noticeable changes in their brains. It’s interesting stuff, and I will write about it later, but this morning I happened to read a recent piece by Olivia Goldhill in Quartz with the provocative title: “The idea that everything from spoons to stones are conscious is gaining academic credibility” (Quartz, Jan 27, 2018). This article had come up in a Twitter discussion involving my colleague and friend Hakwan Lau about the challenge of maintaining the academic credibility of consciousness science, with Hakwan noting that provocative articles like this don’t often get the pushback they deserve.

So here’s some pushback.

Goldhill’s article is about panpsychism, which is the idea that consciousness is a fundamental property of the universe, present to some degree everywhere and in everything. Her article suggests that this view is becoming increasingly acceptable and accepted in academic circles, as so-called ‘traditional’ approaches (materialism and dualism) continue to struggle. On the contrary, although it’s true that panpsychism is being discussed more frequently and more openly these days, it remains very much a fringe proposition within consciousness science and is not taken seriously by many. Nor need it be, since consciousness science is getting along just fine without it. Let me explain how.

From hard problems to real problems

We should start with philosophy. Goldhill correctly identifies David Chalmers’ famous ‘hard problem of consciousness‘ as a key origin of modern panpsychism. This is bolstered by Chalmers’ own increasingly apparent sympathy with this view, as Goldhill’s article makes clear. Put simply, the ‘hard problem’ is about how and why physical interactions of any sort can give rise to conscious experiences. This is indeed a difficult problem, and the apparent unavailability of any current solution is why those who fixate on it might be tempted by the elixir of panpsychism: if consciousness is ‘here, there, and everywhere‘ then there is no longer any hard problem to be solved.

But consciousness science has largely moved on from attempts to address the hard problem (though see IIT, below). This is not a failure, it’s a sign of maturity. Philosophically, the hard problem rests on conceivability arguments such as the possibility of imagining a philosophical ‘zombie’ – a behaviourally and perhaps physically identical version of me, or you, but which lacks any conscious experience, which has no inner universe. Conceivability arguments are generally weak since they often rest on failures of imagination or knowledge, rather than on insights into necessity. For example: the more I know about aerodynamics, the less I can imagine a 787 Dreamliner flying backwards. It cannot be done and such a thing is only ‘conceivable’ through ignorance about how wings work.

In practice, scientists researching consciousness are not spending their time (or their scarce grant money) worrying about conscious spoons; they are getting on with the job of mapping mechanistic properties (of brains, bodies, and environments) onto properties of consciousness. These properties can be described in many different ways, but include – for example – differences between normal wakeful awareness and general anaesthesia; experiences of identifying with and owning a particular body; or distinctions between conscious and unconscious visual perception. If you come to the primary academic meeting on consciousness science – the annual meeting of the Association for the Scientific Study of Consciousness (ASSC) – or read articles either in specialist journals like Neuroscience of Consciousness (I edit this, other journals are available) or in the general academic literature, you’ll find a wealth of work like this and very little – almost nothing – on panpsychism. You’ll find debates on the best way to test whether prefrontal cortex is involved in visual metacognition – but you won’t find any experiments on whether stones are aware. This, again, is maturity, not stagnation. It is also worth pointing out that consciousness science is having increasing impact in medicine, whether through improved methods for detecting residual awareness following brain injury, or via enhanced understanding of the mechanisms underlying psychiatric illness. Thinking about conscious spoons just doesn’t cut it in this regard.

A standard objection at this point is that empirical work touted as being about consciousness science is often about something else: perhaps memory, attention, or visual perception. Yes, some work in consciousness science may be criticized this way, but it is not generally the case. To the extent that the explanatory target of a study encompasses phenomenological properties, or differences between conscious states (e.g., dreamless sleep versus wakeful rest), it is about consciousness. And of course, consciousness is not independent of other cognitive and perceptual processes – so empirical work that focuses on visual perception can be relevant to consciousness even if it does not explicitly contrast conscious and unconscious states.

The next objection goes like this: OK, you may be able to account for properties of consciousness in terms of underlying mechanisms, but this is never going to explain why consciousness is part of the universe in the first place – it is never going to solve the hard problem. Therefore consciousness science is failing. There are two responses to this.

First, wait and see (and ideally do). By building increasingly sophisticated bridges between mechanism and phenomenology, the apparent mystery of the hard problem may dissolve. Certainly, if we stick with simplistic ‘explanations’ – for instance by associating consciousness simply with activity in (for example) the prefrontal cortex – everything may remain mysterious. But if we can explain (for example) the phenomenology of peripheral vision in terms of neurally-encoded predictions of expected visual uncertainty, perhaps we are getting somewhere. It is unwise to pronounce the insufficiency of mechanistic accounts of some putatively mysterious phenomenon before such mechanistic accounts have been fully developed. This is one reason why frameworks like predictive processing are exciting – they provide explanatorily powerful, computationally explicit, and empirically predictive concepts which can help link phenomenology and mechanism. Such concepts can help move beyond correlation towards explanation in consciousness science, and as we move further along this road the hard problem may lose its lustre.

Second, people often seem to expect more from a science of consciousness than they would ask of other scientific explanations. As long as we can formulate explanatorily rich relations between physical mechanisms and phenomenological properties, and as long as these relations generate empirically testable predictions which stand up in the lab (and in the wild), we are doing just fine. Riding behind many criticisms of current consciousness science are unstated intuitions that a mechanistic account of consciousness should be somehow intuitively satisfying, or even that it must allow some kind of instantiation of consciousness in an arbitrary machine. We don’t make these requirements in other areas of science, and indeed the very fact that we instantiate phenomenological properties ourselves might mean that a scientifically satisfactory account of consciousness will never generate the intuitive sensation of ‘ah yes, this is right, it has to be this way’. (Thomas Metzinger makes this point nicely in a recent conversation with Sam Harris.)

Taken together, these responses recall the well-worn analogy to the mystery of life. Not so long ago, scientists thought that the property of ‘being alive’ could never be explained by physics or chemistry. That life had to be more than mere ‘mechanism’. But as biologists got on with the job of accounting for the properties of life in terms of physics and chemistry, the basic mystery of the ontological status of life faded away and people no longer felt the need to appeal to vitalistic concepts like ‘élan vital’. Now of course this analogy is imperfect, and from our current vantage it is impossible to say how closely it will stand up over time. Consciousness and life are not the same (though they may be more closely linked than people tend to think – another story!). But the basic point remains: instead of focusing on a possibly illusory big mystery – and thereby falling for the temptations of easy big solutions like panpsychism – the best strategy is to divide and conquer. Identify properties and account for them, and repeat. Chalmers himself describes something like this strategy when he talks about the ‘mapping problem’, and with tongue-somewhat-in-cheek I’ve called it ‘the real problem of consciousness‘.

The lure of integrated information theory

A major boost for modern panpsychism has come from Giulio Tononi’s much discussed – and fascinating – integrated information theory of consciousness (IIT). This is a formal mathematical theory which attempts to derive constraints on the mechanisms of consciousness from axioms about phenomenology. It’s a complex theory (and apparently getting more complex all the time) but the relevance for panpsychism is straightforward. On IIT, any mechanism that integrates information in the right way exhibits consciousness to some degree. And the ability to integrate information is very general, since it depends on only the cause-effect structure of a system.

Tononi actually goes further than this, in a crucial but subtle way. For him, the (integrated) information that counts is based not only on what a system has done (i.e., what states it has been in), but on what a system could do (i.e., what states it could be in, even if it has never occupied and will never occupy these states). Technically, this is the difference between the empirical distribution of a system and its maximum entropy distribution. This feature of IIT not only makes it hard (usually impossible) to calculate for nontrivial systems, it pushes further towards panpsychism because it implies an ontological status for certain forms of information – much like John Wheeler’s ‘it from bit‘. If (integrated) information is real (and therefore more-or-less everywhere), and if consciousness is based on (integrated) information, then consciousness is also more-or-less everywhere, thus panpsychism.

But this is not the only way to formulate IIT. Several years ago, Adam Barrett and I formulated a measure of integrated information which depends only on the empirical distribution of a system, and now many competing measures exist. These measures can be applied more easily in practice, and they do not directly imply panpsychism because they can be interpreted as explanatory bridges between mechanism and phenomenology (in the ‘real problem’ sense), rather than as claims about what consciousness actually is. So when Goldhill writes that IIT “shares the panpsychist view that physical matter has innate conscious experience” this is only true for the strong version of the theory articulated by Tononi himself. Other views are possible, and more empirically productive.
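For readers who like to see the distinction in concrete terms, here is a minimal sketch of the difference between an empirical distribution and a maximum entropy distribution. It is not an implementation of IIT, nor of any published measure of integrated information; the two-unit binary system and its recorded states are hypothetical, and the function names are my own. It only shows that a distribution built from the states a system has actually visited can differ sharply from one that gives equal weight to every state the system could be in.

```python
from collections import Counter
from itertools import product
from math import log2


def empirical_distribution(observed_states):
    """Probability of each state, estimated from how often it was actually visited."""
    counts = Counter(observed_states)
    total = len(observed_states)
    return {state: n / total for state, n in counts.items()}


def maximum_entropy_distribution(n_units):
    """Uniform distribution over every state that n binary units could occupy."""
    states = list(product((0, 1), repeat=n_units))
    return {state: 1 / len(states) for state in states}


def entropy_bits(distribution):
    """Shannon entropy of a distribution, in bits."""
    return -sum(p * log2(p) for p in distribution.values() if p > 0)


# Hypothetical recording: a two-unit binary system that only ever
# visits two of its four possible states.
observed = [(0, 0), (1, 1), (0, 0), (1, 1), (0, 0), (1, 1), (0, 0), (1, 1)]

empirical = empirical_distribution(observed)
max_ent = maximum_entropy_distribution(2)

print(entropy_bits(empirical))  # 1.0 bit: based on what the system has done
print(entropy_bits(max_ent))    # 2.0 bits: based on what the system could do
```

In this toy case the states (0, 1) and (1, 0) never occur, so they carry no weight in the empirical distribution, yet they count fully in the maximum entropy one. A measure that depends only on the empirical distribution can be estimated from recorded data, which is why such measures are easier to apply in practice.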

Back to science

This leads us to the main problem with panpsychism. It’s not that it sounds crazy, it’s that it cannot be tested. It does not lead to any feasible programme of experimentation. Progress in scientific understanding requires experiments and testability. Given this, it’s curious that Goldhill introduces us to Arthur Eddington, the physicist who experimentally confirmed Einstein’s (totally crazy-sounding) theory of general relativity. Eddington’s immense contribution to experimental physics should not give credence to his views on panpsychism; it should instead remind us of the essential imperative of formulating testable theories, however difficult such tests might be to carry out. (Modern physics is of course now facing a similar testability crisis with string theory.) And outlandish speculations about how quantum entanglement might lead to universe-wide consciousness have no place whatsoever in a rigorous and empirically grounded science of consciousness.

I can’t finish this post without noting that the current attention to panpsychism, especially in the media, has a lot to do with the views of some particularly influential figures in the field: Chalmers and Tononi, but also Christof Koch, whose early work with Francis Crick was fundamental in the rehabilitation of consciousness science in the late 1990s and who continues to be a major figure in the field. These people are all incredibly smart and have made extremely important contributions within consciousness science and beyond. I have learned a great deal from each, and I owe them intellectual debts I will never be able to repay. Having said that, their views on panpsychism are firmly in the minority and should not be over-weighted simply because of their historical contributions and current prominence. Whether there is something about having made such influential contributions that leads to a tendency to adopt countercultural (and difficult to test) views later on – well that’s for another day and another writer.

At the end of her piece, Goldhill quotes Chalmers quoting the philosopher John Perry who says: “If you think about consciousness long enough, you either become a panpsychist or you go into administration.” Perhaps the problem lies in only thinking. We should instead complement thinking with the challenging empirical work of explaining properties of consciousness in terms of biophysical mechanisms. Then we can say: If you work on consciousness long enough, you either become a neuroscientist or you become a panpsychist. I know where I’d rather be – with my many colleagues who are not worrying about conscious spoons but who are trying, and little-by-little succeeding, in unravelling the complex biophysical mechanisms that shape our subjective experiences of world and self. And now it’s high time I got back to that paper on training synaesthesia.

(For more general discussions about consciousness science, where it’s at and where we’re going, have a listen to my recent conversation with Sam Harris. Make sure you have time for it though, it clocks in at over three hours …)