Benedict Cumberbatch as Alan Turing in The Imitation Game. (Spoiler alert: this post reveals some plot details.)

World War Two was won not just with tanks, guns, and planes, but by a crack team of code-breakers led by the brilliant and ultimately tragic figure of Alan Turing. This is the story as told in The Imitation Game, a beautifully shot and hugely popular film which nonetheless left me nursing a deep sense of missed opportunity. True, Benedict Cumberbatch is brilliant, spicing his superb Holmes with a dash of Russell Crowe’s John Nash (A Beautiful Mind) to propel geek rapture into yet higher orbits. (See also Eddie Redmayne and Stephen Hawking.)

The rest was not so good. The clunky acting might reflect a screenplay desperate to humanize and popularize what was fundamentally a triumph of the intellect. But what got to me most was the treatment of Turing himself. On one hand there is the perhaps cinematically necessary canonisation of individual genius, sweeping aside so much important context. On the other there is the saccharine treatment of Turing’s open homosexuality (with compensatory boosting of Keira Knightley’s Joan Clarke) and the egregious scenes in which he stands accused of both treason and cowardice by association with Soviet spy John Cairncross, whom he likely never met. The need for a bad guy also does a disservice to Turing’s Bletchley Park boss Alastair Denniston, who, while a product of old-school classics-inspired cryptography, nonetheless recognized and supported Turing and his crew. Historical jiggery-pokery is of course to be expected in any mass-market biopic, but the story as told in The Imitation Game becomes much less interesting as a result.

I studied at King’s College, Cambridge, Turing’s academic home and also where I first encountered the basics of modern-day computer science and artificial intelligence (AI). By all accounts Turing was a genius, laying the foundations not only for these disciplines but also for other areas of science, which – like AI – didn’t even exist in his time. His theories of morphogenesis presaged contemporary developmental biology, explaining how leopards get their spots. He was a pioneer of cybernetics, an inspired amalgam of engineering and biology that after many years in the academic hinterland is once again galvanising our understanding of how minds and brains work, and what they are for. One can only wonder what more he would have done, had he lived.

There is a breathless moment in the film where Joan Clarke (or poor spy-hungry and historically-unsupported Detective Nock, I can’t remember) wonders whether Turing, in cracking Enigma, has built his ‘universal machine’. This references Turing’s most influential intellectual breakthrough, his conceptual design for a machine that was not only programmable but re-programmable, that could execute any algorithm, any computational process.
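To make the idea of a re-programmable machine concrete, here is a minimal sketch in Python. It is not Turing's own construction: it is a toy simulator in which the "program" is just a table of rules handed to one fixed piece of machinery. The names `run_turing_machine`, `FLIP_BITS`, and `INCREMENT` are invented for this illustration, as is the convention of using `_` for a blank cell.

```python
from collections import defaultdict

def run_turing_machine(program, tape, state="start", blank="_", max_steps=10_000):
    """Run a rule table on a tape and return the final tape contents.

    program maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is "L", "R", or "N" (stay put). All names are illustrative.
    """
    cells = defaultdict(lambda: blank, enumerate(tape))  # unbounded tape
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("machine did not halt within max_steps")
        write, move, state = program[(state, cells[head])]
        cells[head] = write
        head += {"L": -1, "R": 1, "N": 0}[move]
        steps += 1
    if not cells:
        return ""
    lo, hi = min(cells), max(cells)
    return "".join(cells[i] for i in range(lo, hi + 1)).strip(blank)

# Program 1: flip every bit, moving right until a blank is reached.
FLIP_BITS = {
    ("start", "0"): ("1", "R", "start"),
    ("start", "1"): ("0", "R", "start"),
    ("start", "_"): ("_", "N", "halt"),
}

# Program 2: add one to a binary number (walk to the right end, then carry left).
INCREMENT = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "N", "halt"),
    ("carry", "_"): ("1", "N", "halt"),
}

print(run_turing_machine(FLIP_BITS, "1011"))  # 0100
print(run_turing_machine(INCREMENT, "1011"))  # 1100
```

The point of the sketch is that `run_turing_machine` never changes: swapping the rule table is all it takes to make the same machine do a different job, which is the sense in which the design is re-programmable.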

The Universal Turing Machine formed the blueprint for modern-day computers, but the machine that broke Enigma was no such thing. The ‘Bombe’, as it was known, was based on Polish prototypes (the bomba kryptologiczna) and was co-designed with Gordon Welchman, whose critical ‘diagonal board’ innovation is in the film attributed to the suave Hugh Alexander (Welchman doesn’t appear at all). Far from being a universal computer, the Bombe was designed for a single specific purpose – to rapidly run through as many settings of the Enigma machine as possible.

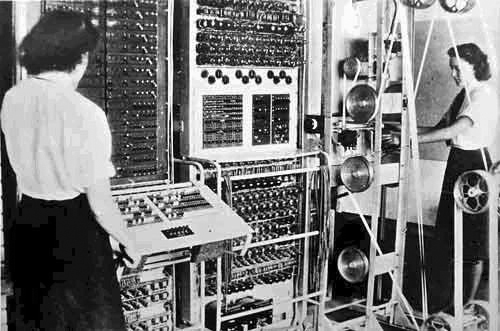

A working rebuilt Bombe at Bletchley Park, containing 36 Enigma equivalents. The (larger) Bombe in The Imitation Game was a high point – a beautiful piece of historical reconstruction.

The Bombe is half the story of Enigma. The other half is pure cryptographic catnip. Even with a working Bombe the number of possible machine settings to be searched each day (the Germans changed all the settings at midnight) was just too large. The code-breakers needed a way to limit the combinations to be tested. And here Turing and his team inadvertently pioneered the principles of modern-day ‘Bayesian’ machine learning, by using prior assumptions to constrain possible mappings between a cipher and its translation. For Enigma, the breakthroughs came on realizing that no letter could encode itself, and that German operators often used the same phrases in repeated messages (“Heil Hitler!”). Hugh Alexander, diagonal boards aside, was supremely talented at this process, which Turing called ‘banburismus’, on account of having to get printed ‘message cards’ from nearby Banbury.

In this way the Bletchley code-breakers combined extraordinary engineering prowess with freewheeling intellectual athleticism, to find a testable range of Enigma settings, each and every day, which were then run through the Bombe until a match was found.
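The “no letter could encode itself” constraint is simple enough to sketch in a few lines of Python. The idea: slide a guessed phrase (a ‘crib’) along an intercepted message and throw out every alignment where a ciphertext letter coincides with the guessed plaintext letter in the same position. This is only an illustration of that one constraint, not a reconstruction of the Bletchley procedures; the function name `possible_crib_positions` and the intercept below are made up for the example.

```python
def possible_crib_positions(ciphertext: str, crib: str) -> list[int]:
    """Return start offsets where the crib could line up with the ciphertext.

    An alignment is ruled out if any ciphertext letter equals the crib letter
    in the same position, since Enigma never encoded a letter as itself.
    """
    positions = []
    for start in range(len(ciphertext) - len(crib) + 1):
        window = ciphertext[start:start + len(crib)]
        if all(c != p for c, p in zip(window, crib)):
            positions.append(start)
    return positions

# Hypothetical intercept and guessed phrase, for illustration only.
intercept = "QWHRTLKEILMZHXTOLPRE"
crib = "HEILHITLER"
print(possible_crib_positions(intercept, crib))
```

Each surviving offset is a candidate alignment worth testing on the machine, and every offset ruled out is work the Bombe never has to do.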

Though it gave the Allies a decisive advantage, the Bombe was not the first computer, not the first ‘digital brain’. This honour belongs to Colossus, also built at Bletchley Park, and based on Turing’s principles, but constructed mainly by Tommy Flowers, Jack Good, and Bill Tutte. Colossus was designed to break the even more heavily encrypted communications the Germans used later in the war: the Tunny cipher. After the war the intense secrecy surrounding Bletchley Park meant that all Colossi (and Bombi) were dismantled or hidden away, depriving Turing, Flowers – and many others – of recognition and setting back the computer age by years. It amazes me that full details about Colossus were only released in 2000.

The Imitation Game of the title is a nod to Turing’s most widely known idea: a pragmatic answer to the philosophically challenging and possibly absurd question, “can machines think?” In one version of what is now known as the Turing Test, a human judge interacts with two players – another human and a machine – and must decide which is which. Interactions are limited to disembodied exchanges of pieces of text, and a candidate machine passes the test when the judge consistently fails to distinguish the one from the other. It is unfortunate but in keeping with the screenplay that Turing’s code-breaking had little to do with his eponymous test.

It is completely understandable that films simplify and rearrange complex historical events in order to generate widespread appeal. But The Imitation Game focuses so much on a distorted narrative of Turing’s personal life that the other story – a thrilling ‘band of brothers’ tale of winning a war by inventing the modern world – is pushed out into the wings. The assumption is that none of this puts bums on seats. But who knows, there might be more to geek-chic than meets the eye.