Image from 30 Second Brain, Ivy Press, available at all good booksellers.

Neuroscientists have long appreciated that people can make accurate decisions without knowing they are doing so. This is particularly impressive in blindsight: a phenomenon where people with damage to the visual parts of their brain can still make accurate visual discriminations while claiming not to see anything. But even in normal life it is quite possible to make good decisions without having reliable insight into whether you are right or wrong.

In a paper published this week in Psychological Science, our research group – led by Ryan Scott – has for the first time shown the opposite phenomenon: blind insight. This is the situation in which people know whether or not they’ve made accurate decisions, even though they can’t make decisions accurately!

This is important because it changes how we think about metacognition. Metacognition, strictly speaking, is ‘knowing about knowing’. When we make a perceptual judgment, or a decision of any kind, we typically have some degree of insight into whether our decision was correct or not. This is metacognition, which in experiments is usually measured by asking people how confident they are in a previous decision. Good metacognitive performance is indicated by high correlations between confidence and accuracy, which can be quantified in various ways.

Most explanations of metacognition assume that metacognitive judgements are based on the same information as the original (‘first-order’) decision. For example, if you are asked to decide whether a dim light was present or not, you might make a (first-order) judgment based on signals flowing from your eyes to your brain. Perhaps your brain sets a threshold below which you will say ‘No’ and above which you will say ‘Yes’. Metacognitive judgments are typically assumed to work on the same data. If you are asked whether you were guessing or were confident, maybe you will set additional thresholds a bit further apart. The idea is that your brain may need more sensory evidence to be confident in judging that a dim light was in fact present, than when merely guessing that it was.

This way of looking at things is formalized by signal detection theory (SDT). The nice thing about SDT is that it can give quantitative mathematical expressions for how well a person can make both first-order and metacognitive judgements, in ways which are not affected by individual biases to say ‘yes’ or ‘no’, or ‘guess’ versus ‘confident’. (The situation is a bit trickier for metacognitive confidence judgements, but we can set these details aside for now: see here for the gory details.) A simple schematic of SDT is shown below.

Signal detection theory. The ‘signal’ refers to sensory evidence and the curves show hypothetical probability distributions for stimulus present (solid line) and stimulus absent (dashed line). If a stimulus (e.g., a dim light) is present, then the sensory signal is likely to be stronger (higher) – but because sensory systems are assumed to be noisy (probabilistic), some signal is likely even when there is no stimulus. The difficulty of the decision is shown by the overlap of the distributions. The best strategy for the brain is to place a single ‘decision criterion’ midway between the peaks of the two distributions, and to say ‘present’ for any signal above this threshold, and ‘absent’ for any signal below. This determines the ‘first-order decision’. Metacognitive judgements are then specified by additional ‘confidence thresholds’ which bracket the decision criterion. If the signal lies in between the two confidence thresholds, the metacognitive response is ‘guess’; if it lies beyond either threshold, at the two extremes, the metacognitive response is ‘confident’. The mathematics of SDT allows researchers to calculate ‘bias free’ measures of how well people can make both first-order and metacognitive decisions (these are called ‘d-primes’). As well as providing a method for quantifying decision-making performance, the framework is also frequently assumed to say something about what the brain is actually doing when it is making these decisions. It is this last assumption that our present work challenges.
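To make the ‘d-prime’ idea concrete, here is a minimal sketch of the textbook equal-variance Gaussian calculation for a first-order yes/no decision. It is an illustration only, not necessarily the analysis used in our paper. The function names and the example rates are made up for the purpose, and the formulas assume both distributions are normal with the same spread.

```python
from statistics import NormalDist

# z-transform: inverse of the standard normal cumulative distribution.
# Only defined for rates strictly between 0 and 1.
_z = NormalDist().inv_cdf


def d_prime(hit_rate: float, false_alarm_rate: float) -> float:
    """Separation between the 'present' and 'absent' distributions,
    in standard-deviation units (equal-variance assumption)."""
    return _z(hit_rate) - _z(false_alarm_rate)


def criterion(hit_rate: float, false_alarm_rate: float) -> float:
    """Response bias: 0 means the criterion sits midway between the
    two peaks; positive values mean a tendency to say 'absent'."""
    return -0.5 * (_z(hit_rate) + _z(false_alarm_rate))


if __name__ == "__main__":
    # Hypothetical observer: says 'present' on 80% of stimulus trials
    # and on 20% of no-stimulus trials.
    print(round(d_prime(0.8, 0.2), 2), round(criterion(0.8, 0.2), 2))
    # An observer at chance has equal hit and false alarm rates.
    print(d_prime(0.5, 0.5))
```

Because sensitivity and bias are computed separately, two people with very different tendencies to say ‘yes’ can still be compared on how well they actually discriminate.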

On SDT it is easy to see that one can make above-chance first-order decisions while displaying low or no metacognition. One way to do this would be to set your metacognitive thresholds very far apart, so that you are always guessing. But there is no way, on this theory (without making various weird assumptions), that you could be at chance in your first-order decisions, yet above chance in your metacognitive judgements about these decisions.
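The argument above can be checked with a small simulation. This is a sketch under stated assumptions rather than a model from the paper: a single noisy signal drives both the first-order decision (criterion at zero) and the confidence report (‘confident’ if the signal is further than an assumed threshold from the criterion). The function name, threshold and trial count are arbitrary choices for illustration.

```python
import random


def simulate(d_prime, conf_threshold=1.0, n_trials=100_000, seed=0):
    """Return (accuracy on 'guess' trials, accuracy on 'confident' trials)
    for an observer who bases both judgements on one shared signal."""
    rng = random.Random(seed)
    # confident? -> [number correct, number of trials]
    counts = {True: [0, 0], False: [0, 0]}
    for _ in range(n_trials):
        present = rng.random() < 0.5
        mean = d_prime / 2 if present else -d_prime / 2
        signal = rng.gauss(mean, 1.0)             # noisy sensory evidence
        said_present = signal > 0.0               # first-order decision
        confident = abs(signal) > conf_threshold  # metacognitive report
        counts[confident][0] += said_present == present
        counts[confident][1] += 1
    acc_guess = counts[False][0] / counts[False][1]
    acc_confident = counts[True][0] / counts[True][1]
    return acc_guess, acc_confident


if __name__ == "__main__":
    for dp in (1.0, 0.0):
        guess, confident = simulate(dp)
        print(f"d'={dp}: guess={guess:.3f}, confident={confident:.3f}")
```

With a d-prime of 1, confident trials come out more accurate than guesses. With a d-prime of 0 the signal carries no information about the stimulus, so both kinds of trial sit at about 50% and confidence tells you nothing about accuracy.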

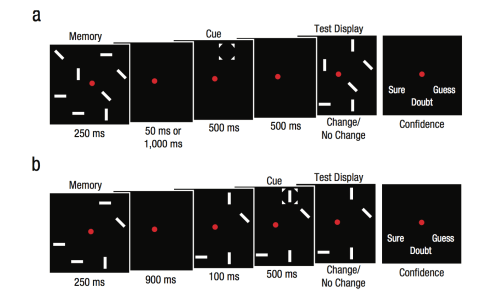

Surprisingly, until now, no one had actually checked to see whether this could happen in practice. This is exactly what we did, and this is exactly what we found. We analysed a large amount of data from a paradigm called artificial grammar learning, which is a workhorse in psychological laboratories for studying unconscious learning and decision-making. In artificial grammar learning people are shown strings of letters and have to decide whether each string belongs to ‘grammar A’ or ‘grammar B’. Each grammar is just an arbitrary set of rules determining allowable patterns of letters. Over time, most people can learn to classify letter strings at better than chance. However, over a large sample, there will always be some people who can’t: for these unfortunates, first-order performance remains at ~50% (in SDT terms they have a d-prime not different from zero).

Artificial grammar learning. Two rule sets (shown on the left) determine which letter strings belong to ‘grammar A’ or ‘grammar B’. Participants are first shown examples of strings generated by one or the other grammar (training). Importantly, they are not told about the grammatical rules, and in most cases they remain unaware of them. Nonetheless, after some training they are able to successfully (i.e., above chance) classify novel letter strings appropriately (testing).

Crucially, subjects in our experiments were asked to make confidence judgments along with their first-order grammaticality judgments. Focusing on those subjects who remained at chance in their first-order judgements, we found that they still showed above-chance metacognition. That is, they were more likely to be confident when they were (by chance) right, than when they were (by chance) wrong. We call this novel finding blind insight.
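One simple way to express ‘more likely to be confident when right than when wrong’ is to compare two proportions: how often a person reports confidence on correct trials versus on incorrect trials. The sketch below is illustrative only. The function name is hypothetical, the trial data are invented for the example, and the paper's own analysis may differ.

```python
def confidence_rates(trials):
    """trials: sequence of (correct, confident) boolean pairs.

    Returns (P(confident | correct), P(confident | incorrect)).
    Assumes at least one correct and one incorrect trial.
    """
    trials = list(trials)
    n_correct = sum(1 for correct, _ in trials if correct)
    n_wrong = len(trials) - n_correct
    conf_correct = sum(1 for correct, conf in trials if correct and conf)
    conf_wrong = sum(1 for correct, conf in trials if not correct and conf)
    return conf_correct / n_correct, conf_wrong / n_wrong


if __name__ == "__main__":
    # Invented participant at exactly 50% first-order accuracy:
    # 10 correct trials (6 confident), 10 incorrect trials (3 confident).
    toy = ([(True, True)] * 6 + [(True, False)] * 4
           + [(False, True)] * 3 + [(False, False)] * 7)
    when_right, when_wrong = confidence_rates(toy)
    print(when_right, when_wrong)
```

In this made-up case the first-order accuracy is at chance, yet confidence is reported twice as often on correct trials as on incorrect ones. That is the pattern that a single shared signal with thresholds cannot produce.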

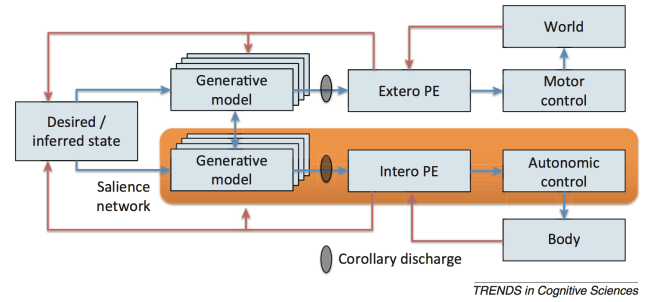

The discovery of blind insight changes the way we think about decision-making. Our results show that theoretical frameworks based on SDT are, at the very least, incomplete. Metacognitive performance during blind insight cannot be explained by simply setting different thresholds on a single underlying signal. Additional information, or substantially different transformations of the first-order signal, are needed. Exactly what is going on remains an open question. Several possible mechanisms could account for our results. One exciting possibility appeals to predictive processing, which is the increasingly influential idea that perception depends on top-down predictions about the causes of sensory signals. If top-down influences are also involved in metacognition, they could carry the additional information needed for blind insight. This would mean that metacognition, like perception, is best understood as a process of probabilistic inference.

In predictive processing theories of brain function, perception depends on top-down predictions (blue) about the causes of sensory signals. Sensory signals carry ‘prediction errors’ (magenta) which update top-down predictions according to principles of Bayesian inference. Maybe a similar process underlies metacognition. Image from 30 Second Brain, Ivy Press.

This brings us to consciousness (of course). Metacognitive judgments are often used as a proxy for consciousness, on the logic that confident decisions are assumed to be based on conscious experiences of the signal (e.g., the dim light was consciously seen), whereas guesses signify that the signal was processed only unconsciously. If metacognition involves top-down inference, this raises the intriguing possibility that metacognitive judgments actually give rise to conscious experiences, rather than just providing a means for reporting them. While speculative, this idea fits neatly with the framework of predictive processing, which says that top-down influences are critical in shaping the nature of perceptual contents.

The discovery of blindsight many years ago has substantially changed the way we think about vision. Our new finding of blind insight may similarly change the way we think about metacognition, and about consciousness too.

The paper is published open access (i.e. free!) in Psychological Science. The authors were Ryan Scott, Zoltan Dienes, Adam Barrett, Daniel Bor, and Anil K Seth. There are also accompanying press releases and coverage:

Sussex study reveals how ‘blind insight’ confounds logic. (University of Sussex, 13/11/2014)

People show ‘blind insight’ into decision making performance (Association for Psychological Science, 13/11/2014)